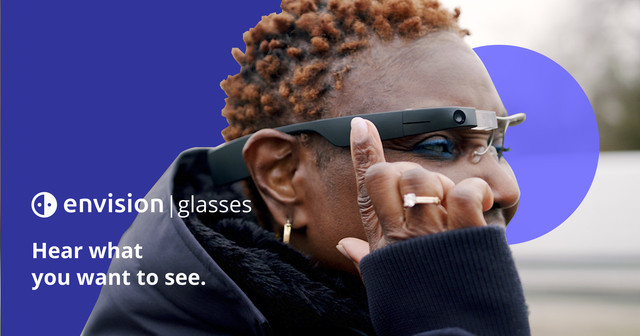

Envision Glasses For The Blind Can Aid Navigation; Scan Faces, Read Documents, And A Lot More

Envision smart glasses are perhaps one of the most impactful products launched in recent times. They use artificial intelligence to assist people who are blind or visually impaired in better understanding their surroundings.

The glasses can scan objects, people, and text using a small camera on the side and then relay that information via a built-in speaker. For example, Envision can tell you if someone is approaching or describe what’s in a room.

Based On Google Glass Enterprise Edition

The new Envision Glasses are based on Google Glass Enterprise Edition. (Yes, Google Glass is still alive and well.) Google unveiled the original smart glasses in 2013, touting them as a way for users to take calls, send texts, take pictures, and look at maps right from the headset, among other things.

However, after a limited – and ultimately unsuccessful – release, they never made it to store shelves.

A few years later, Google began developing an enterprise edition of the glasses, on which Envision is based. Because of their wearable nature, they are ideal for capturing and relaying information as the user sees it.

The Goal Of Envision

There are a few other apps designed to assist people who are blind or have low vision, such as Google’s Lookout app, which can recognize food labels, locate objects in a room, and scan documents and money. The goal of Envision, on the other hand, is to make those experiences more intuitive.

The headset design frees up the wearer’s hands, making it easier to hold a cane or walk a dog, and the camera sits right next to the wearer’s eyes, eliminating the need to hold up a phone to scan the surroundings.